1970 to 2020: Container technology has come a long way

What is the history of containerization in software? Let's look at how it has evolved since its introduction in the 1970s. Once the first container-like approach had been realized, the need for an independent and unified container environment emerged, and with it a new class of platform-independent tooling for packaging applications into containers for easy deployment.

Over the following years, container tooling evolved, and container-based solutions became dominant in terms of user adoption. Adoption in production environments has grown, with many companies now deploying their applications in containers. Containerization technologies were also adopted by other software communities, including server and infrastructure software. Since then, containerization has grown rapidly and has had a massive impact on software development, shaping both modern application architectures and the infrastructure that applications run on.

So what is containerization doing to the software development process today?

Containerization has also created an entirely new way for developers to work. From a business perspective, the application servers that run their software no longer have to be thought of in terms of separate services, modules, or abstractions. Container technologies make it possible to develop applications and their containers independently, which has huge benefits for developers and for the business as well.

While containerization is a valuable tool, it is not the most important factor in designing cloud-native applications. It still has enormous potential for accelerating development across software development paradigms, and the technology is expected to keep evolving, with major changes likely over the next few years.

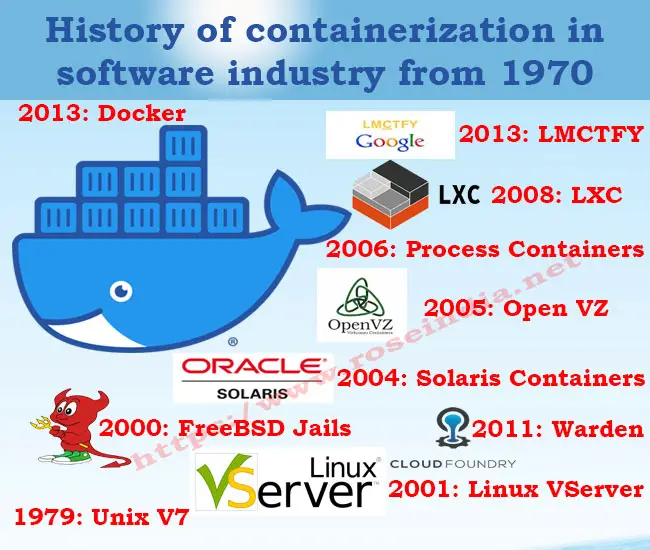

History of containerization in the software industry since 1970

These days (in 2020), companies use advanced container technologies to deploy their applications in distributed environments that support automatic start/stop, scaling, and load balancing. Software infrastructure management activities are now handled by highly intelligent software stacks.

1979: Unix V7

Unix V7 introduced the chroot system call. This was the beginning of process isolation: segregating file access for each process. chroot changes the root directory of a process and its children to a different location in the filesystem. It was added to BSD in 1982.
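
The effect of chroot on file access can be sketched in Python. This is a minimal illustration, not a hardened jail: `host_path_seen_from_jail` and `run_in_chroot` are hypothetical helper names, `/srv/jail` is an assumed directory, and only `os.chroot` and the other `os` calls are real standard-library functions. Actually calling `run_in_chroot` requires root privileges and a jail directory that already contains the program and its libraries.

```python
import os
import posixpath
from typing import List


def host_path_seen_from_jail(jail_root: str, path_in_jail: str) -> str:
    """Map an absolute path, as a chrooted process sees it, to the host path.

    Illustrative only: the kernel performs this translation itself and also
    follows symlinks, which this helper ignores.
    """
    # Normalising an absolute path drops any ".." at the top, so a jailed
    # process asking for "/../etc" still ends up at "/etc" inside the jail.
    inside = posixpath.normpath(posixpath.join("/", path_in_jail))
    return posixpath.normpath(posixpath.join(jail_root, inside.lstrip("/")))


def run_in_chroot(jail_root: str, argv: List[str]) -> int:
    """Run argv in a child process whose root directory is jail_root.

    Assumption: the caller is root and jail_root holds argv[0] plus
    everything that program needs.
    """
    pid = os.fork()
    if pid == 0:
        try:
            os.chroot(jail_root)   # change this process's root directory
            os.chdir("/")          # move into the new root
            os.execv(argv[0], argv)
        finally:
            os._exit(127)          # reached only if one of the steps failed
    _, status = os.waitpid(pid, 0)
    return os.waitstatus_to_exitcode(status)


if __name__ == "__main__":
    print(host_path_seen_from_jail("/srv/jail", "/etc/passwd"))
```

The pure helper shows why chroot counts as isolation: whatever absolute path the jailed process opens, the file it reaches lives under the jail directory on the host.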

2000: FreeBSD Jails

It took a full 21 years before FreeBSD Jails was introduced. It was created by a small shared-environment hosting provider to achieve a clear separation between its own services and those of its customers, for security and ease of administration.

“Jail” here describes the small, independent systems into which a FreeBSD computer system is partitioned.

2001: Linux VServer

Released in 2001, Linux VServer is also a jail mechanism, one that can partition resources such as file systems on a computer system. Experimental patches are still available, but the technology has not been updated in a long time; the last stable release dates from 2006.

2004: Solaris Containers

Solaris Containers combines system resource controls with the boundary separation provided by zones, which can make use of features such as snapshots and cloning from ZFS.

2005: OpenVZ

Also known as Open Virtuozzo, this operating-system-level virtualization technology uses the Linux kernel for virtualization, isolation, resource management, and checkpointing.

2006: Process Containers

Introduced by Google in 2006, Process Containers was designed for limiting, accounting for, and isolating the resource usage (CPU, memory, disk I/O, network) of a collection of processes. One year after its launch, Process Containers was renamed "Control Groups (cgroups)". The technology was merged into Linux kernel 2.6.24.
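
To see control groups from the inside, a process on Linux can read `/proc/self/cgroup`, which lists the cgroups it belongs to, one `hierarchy-ID:controller-list:path` entry per line. The sketch below parses that format. The names `CgroupEntry`, `parse_proc_cgroup`, and `read_own_cgroups` are hypothetical helpers, and `SAMPLE` is made-up text for illustration, not output from a real machine.

```python
from pathlib import Path
from typing import List, NamedTuple


class CgroupEntry(NamedTuple):
    hierarchy_id: int        # 0 for the unified (cgroup v2) hierarchy
    controllers: List[str]   # e.g. ["cpu", "cpuacct"]; empty for cgroup v2
    path: str                # cgroup path relative to the hierarchy root


def parse_proc_cgroup(text: str) -> List[CgroupEntry]:
    """Parse the contents of a /proc/<pid>/cgroup file."""
    entries = []
    for line in text.splitlines():
        if not line.strip():
            continue
        # Split at most twice, because the cgroup path may itself contain ':'
        hierarchy_id, controllers, path = line.split(":", 2)
        names = [c for c in controllers.split(",") if c]
        entries.append(CgroupEntry(int(hierarchy_id), names, path))
    return entries


def read_own_cgroups(proc_file: str = "/proc/self/cgroup") -> List[CgroupEntry]:
    """Return the current process's cgroups, or [] where the file is absent."""
    path = Path(proc_file)
    if not path.exists():
        return []
    return parse_proc_cgroup(path.read_text())


# Made-up sample mixing cgroup v1 style lines with a cgroup v2 style line.
SAMPLE = "12:memory:/user.slice\n3:cpu,cpuacct:/\n0::/init.scope\n"

if __name__ == "__main__":
    for entry in parse_proc_cgroup(SAMPLE):
        print(entry)
```

Reading is harmless and needs no privileges. Setting limits means writing to the cgroup filesystem, which normally requires root and is left out here.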

2008: LXC

LXC stands for LinuX Containers. It was the first, most complete implementation of a Linux container manager, built from cgroups and Linux namespaces.

LXC works on a single Linux kernel and does not require any patches.

2011: Warden

In 2011, Cloud Foundry started Warden, using LXC in the early stages and later replacing it with its own implementation. Warden can isolate environments on any operating system. It uses a client-server model to manage a collection of containers across multiple hosts, and it includes a service to manage cgroups, namespaces, and the process life cycle.

2013: LMCTFY

LMCTFY stands for Let Me Contain That For You. It started in 2013 as an open-source version of Google's container stack, providing Linux application containers. With this technology, applications could be made container aware, creating and managing their own subcontainers.

2013: Docker

Docker is an open source platform that can be used to package, distribute, and run your applications. Docker provides a simple and efficient way to encapsulate an application (for example a Java web application) and any infrastructure required to run it as a single "Docker image", which can then be shared through a central, shared "Docker registry". The image can then be used to launch a "Docker container", which makes the contained application available from the host where the Docker container is running.
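
The package, distribute, and run steps map onto three Docker CLI commands: `docker build`, `docker push`, and `docker run`. The sketch below only assembles those command lines and, optionally, executes them. `docker_workflow` and `run_workflow` are hypothetical helper names, and the image name `registry.example.com/demo/java-web:1.0` and port 8080 are placeholder assumptions, not values from any real project.

```python
import subprocess
from typing import List


def docker_workflow(image: str, context_dir: str,
                    host_port: int, container_port: int) -> List[List[str]]:
    """Return the CLI commands for building, sharing, and launching an image."""
    return [
        # Package: build an image from the Dockerfile in context_dir
        ["docker", "build", "-t", image, context_dir],
        # Distribute: upload the image to the registry named in its tag
        ["docker", "push", image],
        # Run: start a container and publish its port on the host
        ["docker", "run", "--rm", "-p", f"{host_port}:{container_port}", image],
    ]


def run_workflow(commands: List[List[str]]) -> None:
    """Execute each command in order, stopping at the first failure.

    Assumption: the docker CLI is installed and the user is logged in
    to the target registry.
    """
    for argv in commands:
        subprocess.run(argv, check=True)


if __name__ == "__main__":
    # Placeholder image name and ports, for illustration only.
    for argv in docker_workflow("registry.example.com/demo/java-web:1.0", ".", 8080, 8080):
        print(" ".join(argv))
```

Keeping command construction separate from execution makes it possible to inspect or log the commands without having Docker installed.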

2016: DevSecOps

The growth of containerized applications brought more risks and a greater need for container protection. With the arrival of threats like Dirty COW, developing secure containers became an urgent goal. This prompted a shift of security along the software development lifecycle, making it a key part of each stage of application development, an approach known as DevSecOps.
